Your AI Agent Config is Technical Debt You Haven't Acknowledged Yet


Let’s talk about the junk drawer of your AI setup.

Your AI agent configuration has a problem. You know it does. That slash command you wrote three months ago and never touched again (even Armin Ronacher admits to this). The 17 MCP servers you installed because someone on Twitter said they were game-changers. The subagent configuration you copied from a blog post that was already outdated when you found it.

We’ve all been there. And we’re all still there.

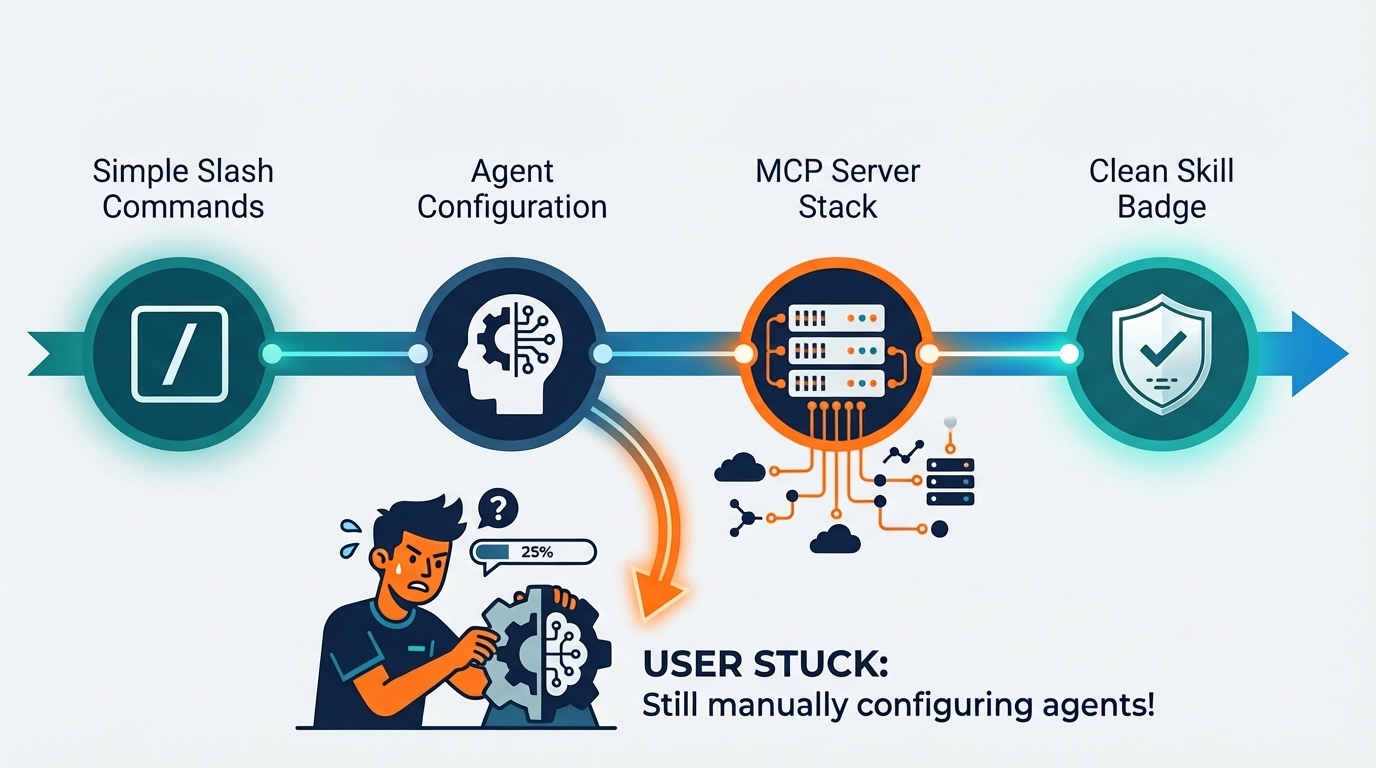

The evolution we didn’t keep up with

The evolution of AI agent configurations - from slash commands to skills

The Claude Code ecosystem is an evolving beast. It started with slash commands: simple enough, right? Write a prompt, invoke it with a /, get stuff done. Then came agents, and the world quickly adopted them. Then we understood the problems.

Meanwhile, the models’ agentic capabilities evolved. A model can now switch seamlessly between tool calls, thinking, and answering, something that wasn’t possible when you wrote that first prompt. On the back of that, people started building and using MCP servers, and influential folks like IndyDevDan (a big fan) explained why prompts on MCP servers are game-changers.

Then you realize your 17 MCP servers are eating half of your context window.
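
If you want a rough number for that, here is a minimal sketch that estimates how many tokens each server’s tool definitions add to a request. Everything in it is an assumption for illustration: the four-characters-per-token heuristic is not a real tokenizer, the server and tool names are made up, and the 200,000-token window is only an example figure. Treat the output as a ranking of which servers are expensive, not as an exact count.

```python
import json

# Rough heuristic, NOT a real tokenizer: roughly 4 characters per token.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Coarse token estimate for a string (never less than 1)."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def tool_overhead(servers: dict) -> dict:
    """Estimate the tokens each server's tool definitions add to a request.

    `servers` maps a server name to a list of tool definitions (dicts).
    """
    return {
        name: sum(estimate_tokens(json.dumps(tool)) for tool in tools)
        for name, tools in servers.items()
    }


def report(servers: dict, context_window: int) -> list:
    """Return (name, tokens, share_of_window) rows, most expensive first."""
    rows = [
        (name, tokens, tokens / context_window)
        for name, tokens in tool_overhead(servers).items()
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical tool definitions, made up for illustration.
    example = {
        "issue-tracker": [
            {"name": "create_issue", "description": "Create an issue. " * 40},
            {"name": "list_issues", "description": "List issues. " * 40},
        ],
        "notes": [{"name": "add_note", "description": "Add a note."}],
    }
    for name, tokens, share in report(example, context_window=200_000):
        print(f"{name:<14} ~{tokens:>5} tokens ({share:.2%} of the window)")
```

Feed it the tool lists your servers actually expose and the servers at the top of the list are the first candidates to drop.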

While you scrambled for workarounds, Claude gave us skills. A better abstraction. A cleaner way to organize capabilities. The ecosystem moved forward.

But did we?

The rot: our agent configuration hasn’t changed

Here’s the uncomfortable truth: we’re still running that slash command from the Claude 3.7 era. The one we wrote when the model needed more hand-holding. The one that over-specifies things the model now understands implicitly.

Or maybe we have the same config structure—slash command, MCP server, and subagent—all doing overlapping things. Your AI agent configuration hasn’t changed, but the model has. And then we wonder why our outputs feel off.

As a result, our reports read like slop that even an AI is tired of reading, while no actual code gets written. We thought our subagent would do the searching, but somehow it doesn’t work the way we imagined.

Our workflow is buggy. Instead of focusing on the task, we’re spending more mental energy on what to invoke rather than what to pass. We’re fighting our own infrastructure instead of shipping.

The explorer’s dilemma

And then, life happens.

Maybe you temporarily need to move to Codex. Or maybe you’re one of those sailors from Columbus or Amerigo Vespucci’s crew in a past life, and you can’t resist exploring every new coding agent that pops up.

So you try something new. And you either:

- Spend hours converting your configs to the new format

- Just run a raw prompt and discard the tool because “it doesn’t work as well”

The second one happens more often, doesn’t it?

Or worse: there’s an agent everyone’s going crazy about. The one that’s supposedly miles ahead. And you can’t move to it because that agent only understands TOML, while you (and everyone and their mom) have your MCP servers configured in some flavor of JSON.[1]

The config format becomes the friction. Not the capability. The config.
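
To show how mechanical that friction usually is, here is a minimal sketch of a JSON-to-TOML conversion for MCP server entries. It assumes the JSON side keeps servers under an `mcpServers` object and that the target agent wants one TOML table per server under `mcp_servers`. Both names are assumptions, so check the target tool’s documentation before relying on them. The emitter is hand-rolled and only handles strings, numbers, booleans, lists of those, and one level of nested objects such as `env`.

```python
import json
import re

BARE_KEY = re.compile(r"^[A-Za-z0-9_-]+$")


def toml_key(key: str) -> str:
    """Return a bare TOML key when allowed, otherwise a quoted one."""
    return key if BARE_KEY.match(key) else json.dumps(key, ensure_ascii=False)


def toml_value(value) -> str:
    """Render a JSON scalar or list of scalars as a TOML value."""
    if isinstance(value, bool):  # check bool before int: bool is an int subclass
        return "true" if value else "false"
    if isinstance(value, (int, float)):
        return str(value)
    if isinstance(value, str):
        # JSON escaping matches TOML basic strings for ordinary text.
        return json.dumps(value, ensure_ascii=False)
    if isinstance(value, list):
        return "[" + ", ".join(toml_value(item) for item in value) + "]"
    raise TypeError(f"unsupported value: {value!r}")


def mcp_json_to_toml(config: dict, table: str = "mcp_servers") -> str:
    """Convert {"mcpServers": {...}} into TOML text, one table per server.

    The "mcpServers" key and the default table name are assumptions.
    """
    lines = []
    for name, server in config.get("mcpServers", {}).items():
        header = f"{table}.{toml_key(name)}"
        lines.append(f"[{header}]")
        nested = {}
        for key, value in server.items():
            if isinstance(value, dict):
                nested[key] = value  # emitted as sub-tables after the scalars
            else:
                lines.append(f"{toml_key(key)} = {toml_value(value)}")
        for key, sub in nested.items():
            lines.append("")
            lines.append(f"[{header}.{toml_key(key)}]")
            for sub_key, sub_value in sub.items():
                lines.append(f"{toml_key(sub_key)} = {toml_value(sub_value)}")
        lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical server entry, made up for illustration.
    source = {
        "mcpServers": {
            "docs": {
                "command": "npx",
                "args": ["-y", "example-docs-server"],
                "env": {"API_TOKEN": "changeme"},
            }
        }
    }
    print(mcp_json_to_toml(source))
```

The conversion itself is a handful of lines. The real cost is knowing each tool’s key names and remembering to rerun it whenever the source config changes.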

The confession: I paid this debt

Using commands and agents, I once tried to build an entire LLM team. The dream setup:

PM → Architect → Planner → Backend Worker → QA → Frontend Worker → QA → Release

And me at every step, orchestrating, reviewing, approving.

This was me mimicking the best team and flow I ever had. A real team. The one that shipped efficiently, communicated well, knew their roles. But here’s the thing—that team ended six months before ChatGPT even launched. I was trying to recreate 2022 with 2024 tools.

What I got: for a simple fullstack change—one CRUD API and a simple page—the number of reports it generated was 10-13. Ten to thirteen reports. For a CRUD endpoint.

I spent more than a week on this. Then I came back to my original prompt. Tweaked it to identify and adjust to complexity levels. Tweaked it to expect more iteration during planning, less handoff overhead.

The result? Even my most complex feature (admin-gating an MCP server) now produces at most 4 reports. One of them is just two commits listed in a txt file. Sometimes there are 5, when I ask for a walkthrough.

From 13 reports to 4. Same tools. Better configuration. (I wrote more about comparing different AI agents and how configuration matters as much as capability.)

The turn

I took the time to debug, rework, and tweak my system. A week of my life for one workflow.

But I did it.

What if you forgot to? What if you’re still running that 3.7-era prompt? Still invoking that subagent that doesn’t fit how the model thinks anymore?

The trouble is, we’re too busy to update what we wrote earlier. There’s always a feature to ship, a bug to fix, a meeting to attend. The config can wait.

And just like code debt—that shortcut we had to take, the one that comes back to bite us later—we have agent config debt. Your AI agent configuration becomes a liability rather than an asset.

It’s the prompt that assumed the model couldn’t think for itself.

It’s the subagent that duplicates what the model now does natively.

It’s the 17 MCP servers when you really need 5.

It’s the workflow designed for a model version that no longer exists.

The mirror

Technical debt in code is something we acknowledge. We track it. We schedule time to pay it down. We talk about it in standups.

Agent config debt? We pretend it doesn’t exist.

But it’s there. In the extra tokens we burn. In the confused outputs we debug. In the workflows that feel heavier than they should. In the friction we’ve normalized.

The ecosystem has moved. The models have evolved. The abstractions have improved. (If you’re curious about my broader lessons from LLM development, that’s where this realization started.)

When’s the last time you actually read your config file?

May the force be with you…

Ready to pay down your agent config debt? Start by reading your CLAUDE.md, your slash commands, your MCP server configs. Ask yourself: does this still make sense for how the model works today? If you’ve done a config audit recently—or discovered your own debt—I’d love to hear about it. The usual channels work.
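
If you don’t know where to start reading, here is a minimal sketch that lists config files nobody has touched in a while, oldest first. The paths in `CANDIDATES` are assumptions about a typical Claude Code project layout, and the 90-day threshold is arbitrary (roughly that “three months ago” slash command). Adjust both to your own setup.

```python
from datetime import datetime, timezone
from pathlib import Path
from typing import Iterator, List, Optional, Tuple

# Assumed locations; change these to wherever your agent configs live.
CANDIDATES = ["CLAUDE.md", ".mcp.json", ".claude/commands", ".claude/agents"]


def config_files(root: Path, candidates=CANDIDATES) -> Iterator[Path]:
    """Yield every existing config file under the candidate paths."""
    for rel in candidates:
        path = root / rel
        if path.is_file():
            yield path
        elif path.is_dir():
            yield from (p for p in sorted(path.rglob("*")) if p.is_file())


def stale_configs(
    root: Path, max_age_days: int = 90, now: Optional[datetime] = None
) -> List[Tuple[Path, int]]:
    """Return (path, age_in_days) for files older than max_age_days, oldest first."""
    now = now or datetime.now(timezone.utc)
    rows = []
    for path in config_files(root):
        modified = datetime.fromtimestamp(path.stat().st_mtime, tz=timezone.utc)
        age = (now - modified).days
        if age > max_age_days:
            rows.append((path, age))
    return sorted(rows, key=lambda row: row[1], reverse=True)


if __name__ == "__main__":
    for path, age in stale_configs(Path.cwd()):
        print(f"{age:>4} days  {path}")
```

Modification time is only a proxy. A file can be old and still correct, or recently touched and still wrong, so treat the list as a reading order rather than a verdict.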

[1] This is why converting between different config formats isn’t just nice-to-have; it’s becoming a necessity for anyone who wants to actually try new tools.